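7 Classic Python Web Scraping Examples (with Source Code)

Original article: https://blog.csdn.net/m0_64336780/article/details/127454511

These seven small Python scraping examples cover re regular expressions, XPath, Beautiful Soup, Selenium and more, which makes them a good reference for anyone just getting started with Python web scraping. Note: if anything here raises copyright or privacy concerns, please contact me and I will remove it promptly.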

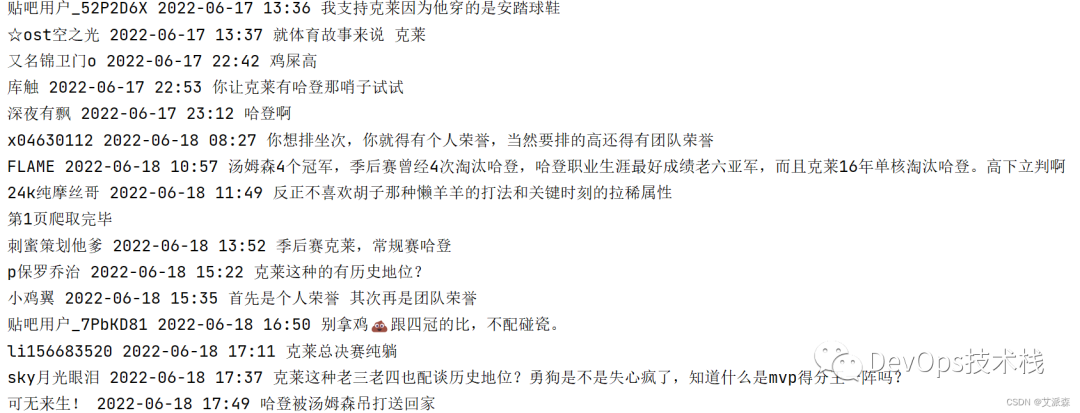

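This example uses a thread from the NBA forum on a certain "X-ba" forum site, titled "Klay or Harden: whose historical standing is higher?". The goal is to scrape the replies in the thread.

Source code and key result screenshots:

    import csv
    import re

    import requests


    def main(page):
        url = f'https://tieba.baidu.com/p/7882177660?pn={page}'
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36'
        }
        resp = requests.get(url, headers=headers)
        html = resp.text
        # Reply text; \s* absorbs the padding that precedes each reply
        comments = re.findall(r'style="display:;">\s*(.*?)</div>', html)
        # User names
        users = re.findall('class="p_author_name j_user_card" href=".*?" target="_blank">(.*?)</a>', html)
        # Reply times; the site itself uses the simplified character 楼
        comment_times = re.findall('楼</span><span class="tail-info">(.*?)</span><div', html)
        for u, c, t in zip(users, comments, comment_times):
            # Skip replies that still contain markup, or a malformed user name
            if 'img' in c or 'div' in c or len(u) > 50:
                continue
            csvwriter.writerow((u, t, c))


    if __name__ == '__main__':
        with open('01.csv', 'a', encoding='utf-8') as f:
            csvwriter = csv.writer(f)
            csvwriter.writerow(('User', 'Time', 'Comment'))
            # The page loop was lost from the source; this range is an assumption
            for page in range(1, 6):
                main(page)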
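The regex-then-zip pattern above can be tried offline. The following is only a minimal sketch: the HTML snippet, the class names and the `parse_replies` helper are invented for illustration, and the real page markup is considerably messier.

```python
import csv
import io
import re

# Hypothetical, simplified markup; not the real page structure.
SAMPLE_HTML = (
    '<a class="user">alice</a><div class="reply">Klay, easily.</div>'
    '<a class="user">bob</a><div class="reply"><img src="x.png"></div>'
    '<a class="user">carol</a><div class="reply">Harden has the MVP.</div>'
)


def parse_replies(html):
    """Return (user, reply) pairs, skipping replies that still contain markup."""
    users = re.findall(r'<a class="user">(.*?)</a>', html)
    replies = re.findall(r'<div class="reply">(.*?)</div>', html)
    rows = []
    for user, reply in zip(users, replies):
        if '<img' in reply or '<div' in reply:
            continue  # image-only or nested replies are dropped
        rows.append((user, reply))
    return rows


rows = parse_replies(SAMPLE_HTML)

# Write to an in-memory buffer so the example leaves no file behind.
buffer = io.StringIO()
writer = csv.writer(buffer)
writer.writerow(('user', 'reply'))
writer.writerows(rows)
print(buffer.getvalue())
```

One caveat of this approach: `zip` pairs the lists purely by position, so if one pattern misses an item on the real page, every later row is shifted. Parsing each reply container as a unit avoids that.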

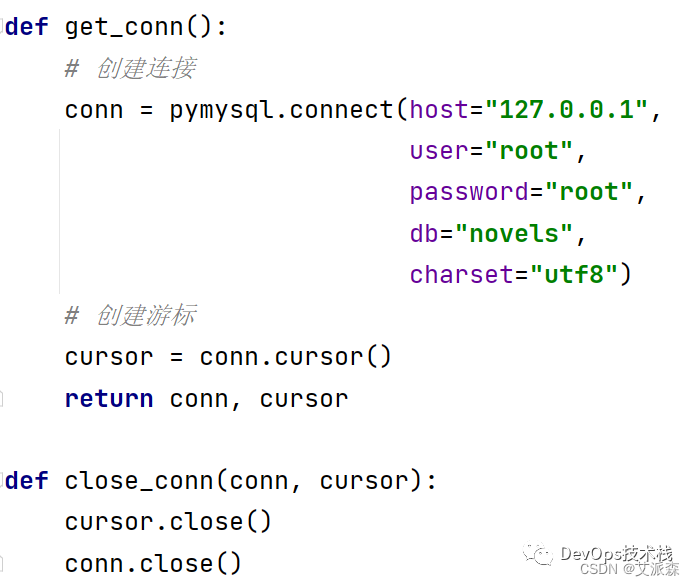

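This example targets a certain novel site; we scrape the first novel listed.

We then inspect the page source to find the link to each chapter.

Once the links are located, we use XPath to extract each chapter's link and title.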
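As a rough illustration of that extraction step, here is a sketch that pulls chapter titles and links from a tiny document. It uses the standard library's `xml.etree.ElementTree`, which supports only a subset of XPath, as a stand-in for lxml; the markup, `BASE_URL` and the chapter names are all invented.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

BASE_URL = 'https://example.com/book_1/'  # hypothetical index page URL

# Invented, well-formed markup; real pages usually need a lenient HTML parser.
SAMPLE = """
<html><body>
  <div id="info"><h1>Sample Novel</h1></div>
  <dl>
    <dd><a href="1.html">Chapter 1</a></dd>
    <dd><a href="2.html">Chapter 2</a></dd>
  </dl>
</body></html>
""".strip()

tree = ET.fromstring(SAMPLE)
novel_name = tree.find(".//div[@id='info']/h1").text

chapters = []
for a in tree.findall('.//dl/dd/a'):
    # Resolve relative hrefs against the index page URL
    chapters.append((a.text, urljoin(BASE_URL, a.get('href'))))

print(novel_name, chapters)
```

Relative paths anchored on an `id` tend to survive layout changes better than absolute paths such as `/html/body/div[4]/...`.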

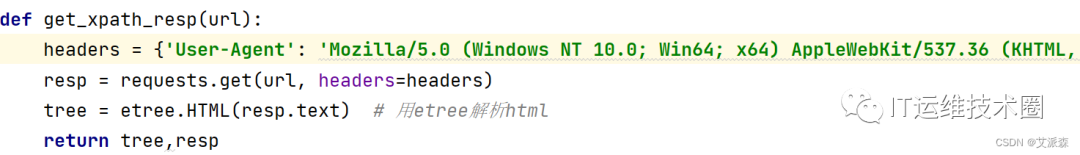

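Because requests are sent with requests many times, we wrap the call in a function so it can be reused later.

With the chapter links in hand, we move on to analyzing the chapter content pages.

The page source shows that the chapter text is embedded directly in the HTML, so it is static data.

We extract it with a re regular expression and then clean up the result.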
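A minimal sketch of that extract-and-clean step, run on an invented page fragment. The `extract_content` helper and the sample text are assumptions for illustration; only the `<div id="content">` container comes from the article's own code.

```python
import html
import re

# Invented fragment that mimics a chapter page.
SAMPLE_PAGE = (
    '<html><body><div id="content">'
    '&nbsp;&nbsp;First paragraph.<br />\n'
    '&nbsp;&nbsp;Second paragraph.<br />'
    '</div></body></html>'
)


def extract_content(page):
    """Pull the chapter text out of the content div and tidy it up."""
    # re.S lets "." match newlines, so text spanning several lines is captured
    match = re.search(r'<div id="content">(.*?)</div>', page, re.S)
    if match is None:
        return ''
    text = match.group(1)
    text = re.sub(r'<br\s*/?>', '\n', text)          # <br> tags become line breaks
    text = html.unescape(text).replace('\xa0', ' ')  # &nbsp; becomes a plain space
    lines = [line.strip() for line in text.splitlines()]
    return '\n'.join(line for line in lines if line)


content = extract_content(SAMPLE_PAGE)
print(content)
```

Using `re.search` with a `None` check avoids the `IndexError` that `re.findall(...)[0]` raises when a page does not match.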

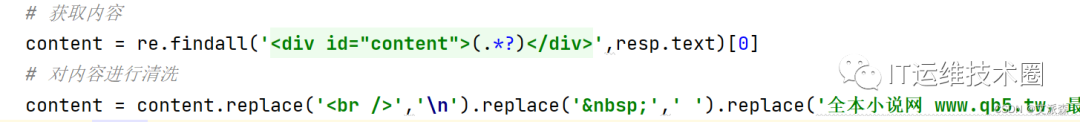

Next the data needs to be saved to a database. Here I store it in MySQL, and first wrap the database operations in helper functions.

The scraped data is then saved.
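The wrap-then-insert idea can be sketched without a MySQL server. The example below deliberately swaps in the standard library's `sqlite3` as a stand-in; the table and column names follow the article's `INSERT` statement, while `save_chapter` and the sample row are invented. Note that sqlite3 uses `?` placeholders where pymysql uses `%s`.

```python
import sqlite3


def get_conn(path=':memory:'):
    """Open a connection and make sure the target table exists."""
    conn = sqlite3.connect(path)
    conn.execute(
        'CREATE TABLE IF NOT EXISTS novel ('
        'novel_name TEXT, chapter_name TEXT, content TEXT)'
    )
    return conn


def save_chapter(conn, novel_name, chapter_name, content):
    # Placeholders keep quoting and escaping out of the SQL string
    conn.execute(
        'INSERT INTO novel(novel_name, chapter_name, content) VALUES (?, ?, ?)',
        (novel_name, chapter_name, content),
    )
    conn.commit()


conn = get_conn()
save_chapter(conn, 'Sample Novel', 'Chapter 1', "It's a start.")
count = conn.execute('SELECT COUNT(*) FROM novel').fetchone()[0]
stored = conn.execute('SELECT content FROM novel').fetchone()[0]
print(count, stored)
```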

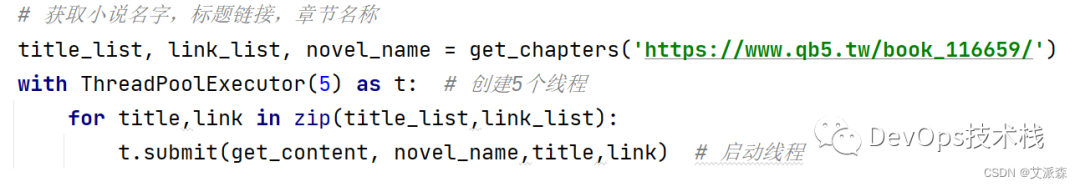

The last step is to use multithreading to make the scraper faster. Here we create a thread pool with 5 worker threads.
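A small sketch of the thread-pool pattern, with a sleep standing in for the network request. `fetch_chapter` and the chapter titles are invented; the pool size of 5 matches the article.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


def fetch_chapter(title):
    """Stand-in for a network request: wait briefly, then return fake text."""
    time.sleep(0.05)
    return title, f'content of {title}'


titles = [f'Chapter {i}' for i in range(1, 11)]
results = {}

with ThreadPoolExecutor(max_workers=5) as pool:
    futures = [pool.submit(fetch_chapter, t) for t in titles]
    for future in as_completed(futures):
        # result() re-raises any exception thrown inside the worker
        title, text = future.result()
        results[title] = text

print(len(results))
```

Keeping the futures and calling `result()` matters: if the return value of `submit` is discarded, an exception inside a worker goes unnoticed.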

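Source code and result screenshots:

    import re
    from concurrent.futures import ThreadPoolExecutor
    from urllib.parse import urljoin

    import pymysql
    import requests
    from lxml import etree


    def get_conn():
        # Only the host survived in the source; the other arguments are placeholders
        conn = pymysql.connect(host="127.0.0.1",
                               user="YOUR_USER",
                               password="YOUR_PASSWORD",
                               database="YOUR_DATABASE",
                               charset="utf8mb4")
        cursor = conn.cursor()
        return conn, cursor


    def close_conn(conn, cursor):
        cursor.close()
        conn.close()


    def get_xpath_resp(url):
        headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36'}
        resp = requests.get(url, headers=headers)
        tree = etree.HTML(resp.text)
        return tree, resp


    def get_chapters(url):
        tree, _ = get_xpath_resp(url)
        novel_name = tree.xpath('//*[@id="info"]/h1/text()')[0]
        dds = tree.xpath('/html/body/div[4]/dl/dd')
        title_list = []
        link_list = []
        for d in dds:
            title = d.xpath('./a/text()')[0]
            link = d.xpath('./a/@href')[0]
            # How chapter_url was built was lost from the source; urljoin is an assumption
            chapter_url = urljoin(url, link)
            title_list.append(title)
            link_list.append(chapter_url)
        return title_list, link_list, novel_name


    def get_content(novel_name, title, url):
        conn, cursor = get_conn()
        sql = 'INSERT INTO novel(novel_name,chapter_name,content) VALUES(%s,%s,%s)'
        tree, resp = get_xpath_resp(url)
        content = re.findall('<div id="content">(.*?)</div>', resp.text)[0]
        # The source also stripped a site banner starting with
        # '全本小说网 www.qb5.tw,最快更新<a'; that string is truncated in the source.
        content = content.replace('<br />', '\n').replace('&nbsp;', ' ')
        cursor.execute(sql, [novel_name, title, content])
        conn.commit()
        close_conn(conn, cursor)


    if __name__ == '__main__':
        title_list, link_list, novel_name = get_chapters('https://www.qb5.tw/book_116659/')
        with ThreadPoolExecutor(5) as t:
            for title, link in zip(title_list, link_list):
                t.submit(get_content, novel_name, title, link)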

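3. Using XPath and Beautiful Soup 4 respectively, scrape and save the name, description, rating, number of ratings and similar data from a non-asynchronously loaded "X-ban ranking list", such as https://movie.douban.com/top250.

Analysis first:

Open the Top 250 page. We start with the XPath version. Inspecting the movie list page shows that all the data is present in the page source, so it is static data.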

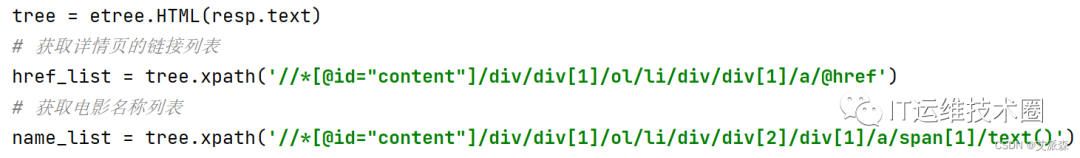

Next we find the pattern in the data and use XPath to extract each movie's link and name.

We then follow each link to the movie's detail page.

The detail page turns out to be static as well, so we keep using XPath to extract the data.
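The list is paginated through the `start` query parameter, 25 movies per page, and the scraper pauses for a randomized interval between requests. A minimal sketch of both ideas follows; `page_urls` and `polite_delay` are hypothetical helper names, and no request is actually sent.

```python
import random


def page_urls(pages, page_size=25):
    """Build the list-page URLs: start=0, 25, 50, ..."""
    return [
        f'https://movie.douban.com/top250?start={page * page_size}&filter='
        for page in range(pages)
    ]


def polite_delay(base):
    """Seconds to wait before the next request: base plus up to one second of jitter."""
    return base + random.random()


urls = page_urls(10)
print(urls[0])
print(urls[-1])
print(round(polite_delay(1), 2))
```

In a real run the value from `polite_delay` would be passed to `time.sleep` between requests, so the traffic does not arrive at a perfectly regular rhythm.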

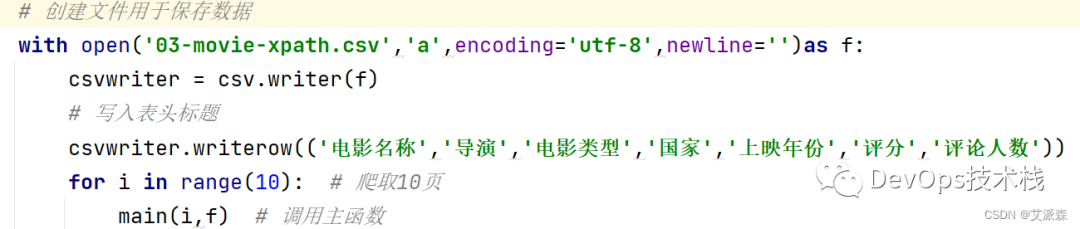

Finally we store the scraped data, this time in a CSV file.
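A short sketch of writing and re-reading a CSV file with the options that usually matter for scraped text. The rows are placeholders rather than real data, and the temporary path is only there to keep the example self-contained.

```python
import csv
import os
import tempfile

rows = [
    ('Movie A', 'Director A', 9.5),  # placeholder rows, not real data
    ('Movie B', 'Director B', 9.1),
]

path = os.path.join(tempfile.mkdtemp(), 'movies.csv')

# newline='' stops the csv module from emitting blank lines on Windows;
# utf-8-sig adds a BOM so spreadsheet software detects the encoding.
with open(path, 'w', encoding='utf-8-sig', newline='') as f:
    writer = csv.writer(f)
    writer.writerow(('name', 'director', 'rating'))
    writer.writerows(rows)

with open(path, encoding='utf-8-sig', newline='') as f:
    loaded = list(csv.reader(f))

print(loaded)
```

Opening the file with mode `'w'` rewrites it on each run. With mode `'a'`, the header row is appended again every time the script runs.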

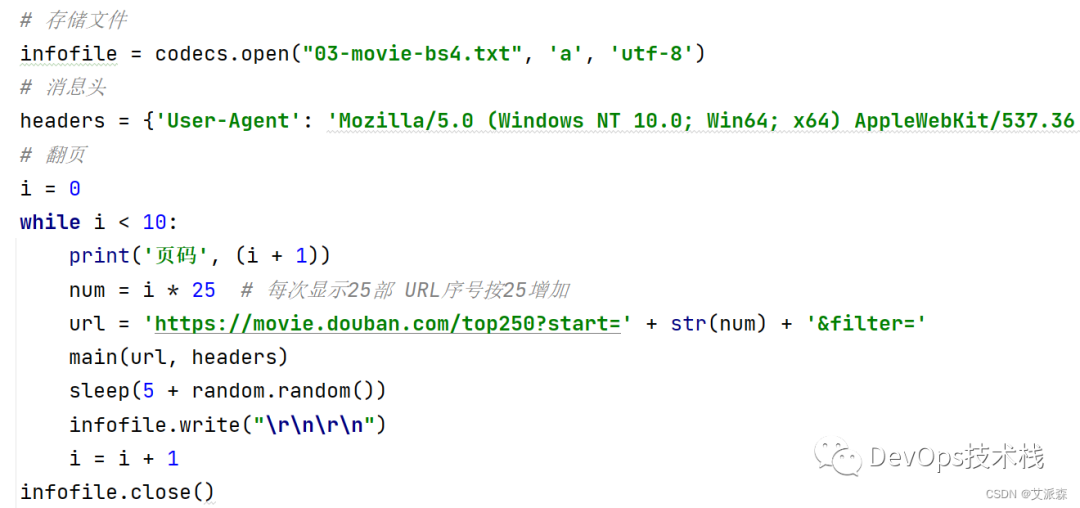

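Next is the Beautiful Soup 4 version. Here we extract the data directly from the movie list page with the BeautifulSoup class from bs4.

Finally, the results are saved to a file as well; the Beautiful Soup script writes plain text to `03-movie-bs4.txt`.

Source code and result screenshots:

XPath version: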

    import csv
    import random
    import re
    from time import sleep

    import requests
    from lxml import etree


    def main(page):
        url = f'https://movie.douban.com/top250?start={page*25}&filter='
        headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/99.0.4844.35 Safari/537.36',}
        resp = requests.get(url, headers=headers)
        tree = etree.HTML(resp.text)
        # Detail-page links
        href_list = tree.xpath('//*[@id="content"]/div/div[1]/ol/li/div/div[1]/a/@href')
        # Movie names
        name_list = tree.xpath('//*[@id="content"]/div/div[1]/ol/li/div/div[2]/div[1]/a/span[1]/text()')
        for url, name in zip(href_list, name_list):
            get_info(url, name)
            sleep(1 + random.random())


    def get_info(url, name):
        headers = {
            'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/99.0.4844.35 Safari/537.36',
            'Host': 'movie.douban.com',
        }
        resp = requests.get(url, headers=headers)
        html = resp.text
        tree = etree.HTML(html)
        # "dir" and "time" in the source are renamed so they do not shadow built-ins
        director = tree.xpath('//*[@id="info"]/span[1]/span[2]/a/text()')[0]
        type_ = re.findall(r'property="v:genre">(.*?)</span>', html)
        # The page itself uses the simplified characters 地区
        country = re.findall(r'地区:</span> (.*?)<br', html)[0]
        year = tree.xpath('//*[@id="content"]/h1/span[2]/text()')[0]
        rate = tree.xpath('//*[@id="interest_sectl"]/div[1]/div[2]/strong/text()')[0]
        people = tree.xpath('//*[@id="interest_sectl"]/div[1]/div[2]/div/div[2]/a/span/text()')[0]
        print(name, director, type_, country, year, rate, people)
        csvwriter.writerow((name, director, type_, country, year, rate, people))


    if __name__ == '__main__':
        with open('03-movie-xpath.csv', 'a', encoding='utf-8', newline='') as f:
            csvwriter = csv.writer(f)
            csvwriter.writerow(('Title', 'Director', 'Genre', 'Country', 'Year', 'Rating', 'Votes'))
            # The loop was lost from the source; 250 movies at 25 per page is 10 pages
            for i in range(10):
                main(i)
                sleep(3 + random.random())

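Beautiful Soup 4 version:

    import codecs
    import random
    import urllib.request
    from time import sleep

    from bs4 import BeautifulSoup


    def crawl(url, headers):
        page = urllib.request.Request(url, headers=headers)
        page = urllib.request.urlopen(page)
        contents = page.read()
        soup = BeautifulSoup(contents, "html.parser")
        for tag in soup.find_all(attrs={"class": "item"}):
            # Rank
            num = tag.find('em').get_text()
            infofile.write(num + "\r\n")
            # Chinese title
            name = tag.find_all(attrs={"class": "title"})
            zwname = name[0].get_text()
            infofile.write("[Chinese title]" + zwname + "\r\n")
            # Link to the detail page
            url_movie = tag.find(attrs={"class": "hd"}).a
            urls = url_movie.attrs['href']
            infofile.write("[Link]" + urls + "\r\n")
            # Rating and number of ratings
            info = tag.find(attrs={"class": "star"}).get_text()
            info = info.replace('\n', ' ')
            # The lines that wrote this field were lost from the source; this write is an assumption
            infofile.write("[Rating]" + info.strip() + "\r\n")
            # One-line review; the guard is an assumption for entries without one
            info = tag.find(attrs={"class": "inq"})
            if info:
                content = info.get_text()
                infofile.write("[Review]" + content + "\r\n")


    if __name__ == '__main__':
        infofile = codecs.open("03-movie-bs4.txt", 'a', 'utf-8')
        headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'}
        # The loop was lost from the source; 250 movies at 25 per page is 10 pages
        for i in range(10):
            num = i * 25
            url = 'https://movie.douban.com/top250?start=' + str(num) + '&filter='
            crawl(url, headers)
            sleep(5 + random.random())
            infofile.write("\r\n\r\n")
        infofile.close()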

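4. Scrape the review data for a product on a certain "X-dong" mall (at least 100 reviews, including review text, time and score).

Analysis first:

This example uses a Lenovo laptop listed on the site. The review data is loaded dynamically, so we capture the network requests with the browser's developer tools to find the API that returns it.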
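Once the API is found, the job is mostly building per-page query parameters and reading fields out of the JSON. The sketch below runs offline on an invented response body. The field names `comments`, `content`, `creationTime` and `productId` appear in the article's code; `score` as a response key, the `page` and `pageSize` parameters, and both helper functions are assumptions.

```python
import json

# Hypothetical response body, shaped like the fields the scraper reads.
SAMPLE_RESPONSE = json.dumps({
    'comments': [
        {'content': 'Fast delivery.\nWorks well.', 'creationTime': '2022-10-01 10:00:00', 'score': 5},
        {'content': 'Fan is loud.', 'creationTime': '2022-10-02 09:30:00', 'score': 3},
    ]
})


def build_params(product_id, page):
    """Query parameters for one page; names other than productId are assumed."""
    return {'productId': product_id, 'page': page, 'pageSize': 10}


def parse_comments(body):
    """Turn one JSON response into (score, time, text) rows."""
    rows = []
    for comment in json.loads(body).get('comments', []):
        text = comment['content'].replace('\n', ' ')  # keep each review on one line
        rows.append((comment['score'], comment['creationTime'], text))
    return rows


rows = parse_comments(SAMPLE_RESPONSE)
print(build_params(100011483893, 0))
print(rows)
```

With 10 reviews per page, at least 10 pages are needed to reach the 100 reviews the task asks for.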

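Source code and result screenshots:

    import csv

    import requests


    def main(page):
        url = 'https://club.jd.com/comment/productPageComments.action'
        params = {
            'productId': 100011483893,
            # The other query parameters were lost from the source. The values
            # below are assumptions; copy the real ones from the captured request.
            'score': 0,
            'sortType': 5,
            'page': page,
            'pageSize': 10,
        }
        headers = {
            'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/99.0.4844.35 Safari/537.36',
            'referer': 'https://item.jd.com/'
        }
        resp = requests.get(url, params=params, headers=headers).json()
        comments = resp['comments']
        for comment in comments:
            content = comment['content']
            content = content.replace('\n', '')
            comment_time = comment['creationTime']
            # This assignment was lost from the source; the key name is an assumption
            score = comment['score']
            print(score, comment_time, content)
            csvwriter.writerow((score, comment_time, content))


    if __name__ == '__main__':
        with open('04.csv', 'a', encoding='utf-8', newline='') as f:
            csvwriter = csv.writer(f)
            csvwriter.writerow(('Score', 'Review time', 'Review text'))
            # The page loop was lost from the source; this range is an assumption
            for page in range(10):
                main(page)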

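5. Simulate logging in to a certain "X-hu" site using several methods, and scrape one question and its answers related to Jianghan University.

First, open the login page with selenium, then log in by scanning the QR code with a phone.

Once logged in, open the developer tools, locate the search box element, type in Jianghan University, and click the search button.

We take the second post in the results as an example and analyze its elements.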

Source code and result screenshots:

    import warnings

    from selenium.webdriver.chrome.service import Service
    from selenium.webdriver import Chrome, ChromeOptions
    from selenium.webdriver.common.by import By

    warnings.filterwarnings("ignore")


    def main():
        service = Service('chromedriver.exe')
        options = ChromeOptions()
        # Hide the usual automation flags
        options.add_experimental_option('excludeSwitches', ['enable-automation', 'enable-logging'])
        options.add_experimental_option('useAutomationExtension', False)
        driver = Chrome(service=service, options=options)
        # The script body was partly lost from the source; this is the usual form of the snippet
        driver.execute_cdp_cmd("Page.addScriptToEvaluateOnNewDocument", {
            "source": """
                Object.defineProperty(navigator, 'webdriver', {
                    get: () => undefined
                })
            """
        })
        driver.get('https://www.zhihu.com/')
        # The wait for the QR-code login was lost from the source; this prompt is an assumption
        input('Scan the QR code to log in, then press Enter to continue...')
        driver.find_element(By.ID, 'Popover1-toggle').click()
        # The source typed 汉江大学; the task names 江汉大学 (Jianghan University)
        driver.find_element(By.ID, 'Popover1-toggle').send_keys('江汉大学')
        driver.find_element(By.XPATH, '//*[@id="root"]/div/div[2]/header/div[2]/div[1]/div/form/div/div/label/button').click()
        driver.implicitly_wait(20)
        title = driver.find_element(By.XPATH, '//*[@id="SearchMain"]/div/div/div/div/div[2]/div/div/div/h2/div/a/span').text
        driver.find_element(By.XPATH, '//*[@id="SearchMain"]/div/div/div/div/div[2]/div/div/div/div/span/div/button').click()
        content = driver.find_element(By.XPATH, '//*[@id="SearchMain"]/div/div/div/div/div[2]/div/div/div/div/span[1]/div/span/p').text
        driver.find_element(By.XPATH, '//*[@id="SearchMain"]/div/div/div/div/div[2]/div/div/div/div/div[3]/div/div/button[1]').click()
        driver.find_element(By.XPATH, '//*[@id="SearchMain"]/div/div/div/div/div[2]/div/div/div/div[2]/div/div/div[2]/div[2]/div/div[3]/button').click()
        # The file writes were lost from the source; the three writes below are assumptions
        f.write(title + '\n')
        f.write(content + '\n')
        divs = driver.find_elements(By.XPATH, '/html/body/div[6]/div/div/div[2]/div/div/div/div[2]/div[3]/div')
        for div in divs:
            comment = div.find_element(By.XPATH, './div/div/div[2]').text
            f.write(comment + '\n')
        driver.quit()


    if __name__ == '__main__':
        with open('05.txt', 'a', encoding='utf-8') as f:
            main()

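6. Drawing on everything learned so far, scrape the first 5 pages of posts from a user on a certain "X-bo" site.

Here we scrape the posts of the People's Daily account. I won't show the page itself here, to avoid breaking any rules.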

Source code and result screenshots:

    import csv

    import requests


    def main(page):
        url = f'https://weibo.com/ajax/statuses/mymblog?uid=2803301701&page={page}&feature=0&since_id=4824543023860882kp{page}'
        headers = {
            'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36',
            # Copy the cookie from your own logged-in browser session
            'cookie': 'PASTE_YOUR_OWN_COOKIE_HERE',
        }
        resp = requests.get(url, headers=headers)
        data_list = resp.json()['data']['list']
        for item in data_list:
            created_time = item['created_at']
            author = item['user']['screen_name']
            # This assignment was lost from the source; the key name is an assumption
            title = item['text_raw']
            reposts_count = item['reposts_count']
            comments_count = item['comments_count']
            attitudes_count = item['attitudes_count']
            csvwriter.writerow((created_time, author, title, reposts_count, comments_count, attitudes_count))
            print(created_time, author, title, reposts_count, comments_count, attitudes_count)


    if __name__ == '__main__':
        with open('06-2.csv', 'a', encoding='utf-8', newline='') as f:
            csvwriter = csv.writer(f)
            csvwriter.writerow(('Posted at', 'Author', 'Post text', 'Reposts', 'Comments', 'Likes'))
            # The loop was lost from the source; the task asks for the first 5 pages
            for page in range(1, 6):
                main(page)

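7. Pick a trending topic or one that interests you, scrape the data and do a brief analysis (for example, scrape movie names, genres and total box office to analyze the average box office by genre, or the trend of each year's box-office champion over ten years; or scrape the population of each Chinese province to analyze the country's population distribution).

This example uses the endata site (ys.endata.cn), and the goal is to scrape its box-office ranking. We find the data API by capturing requests in the developer tools, then write the code to fetch the data.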

源代碼及結(jié)果截圖:

-

import matplotlib.pyplot as plt

warnings.filterwarnings('ignore')plt.rcParams['font.sans-serif'] = ['SimHei']plt.rcParams['axes.unicode_minus'] = False

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/105.0.0.0 Safari/537.36',}

'r': '0.9936776079863086',

resp = requests.post('https://ys.endata.cn/enlib-api/api/home/getrank_mainland.do', headers=headers, data=data)data_list = resp.json()['data']['table0']

MovieName = item['MovieName']ReleaseTime = item['ReleaseTime']TotalPrice = item['BoxOffice']AvgPrice = item['AvgBoxOffice']AvgAudienceCount = item['AvgAudienceCount']

csvwriter.writerow((rank,MovieName,ReleaseTime,TotalPrice,AvgPrice,AvgAudienceCount))print(rank,MovieName,ReleaseTime,TotalPrice,AvgPrice,AvgAudienceCount)

data = pd.read_csv('07.csv')

data['年份'] = data['上映時間'].apply(lambda x: x.split('-')[0])

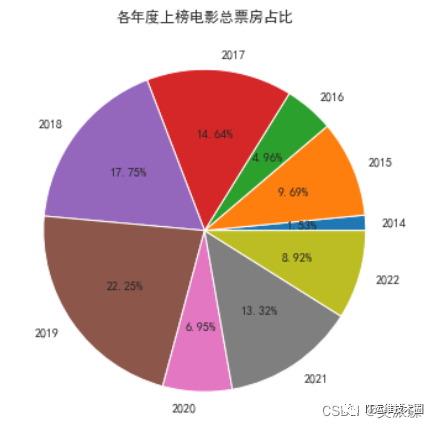

df1 = data.groupby('年份')['總票房(萬)'].sum()plt.figure(figsize=(6, 6))plt.pie(df1, labels=df1.index.to_list(), autopct='%1.2f%%')plt.title('各年度上榜電影總票房占比')

df1 = data.groupby('年份')['總票房(萬)'].sum()plt.figure(figsize=(6, 6))plt.plot(df1.index.to_list(), df1.values.tolist())plt.title('各年度上榜電影總票房趨勢')

print(data.sort_values(by='平均票價', ascending=False)[['年份', '電影名稱', '平均票價']].head(10))

print(data.sort_values(by='平均場次', ascending=False)[['年份', '電影名稱', '平均場次']].head(10))

if __name__ == '__main__':

with open('07.csv', 'w', encoding='utf-8',newline='') as f:csvwriter = csv.writer(f)

csvwriter.writerow(('排名', '電影名稱', '上映時間', '總票房(萬)', '平均票價', '平均場次'))

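Looking at each year's share of the listed films' box office, 2019 has the largest share, which suggests that the films released in 2019 were strong: many of them made the list and they earned high box office.

In terms of trend, the total box office of listed films kept growing from 2016 to 2019 and peaked in 2019, a very strong year for cinema. It then dropped sharply in 2020, most likely because the pandemic broke out early that year: the New Year holiday releases did not open as scheduled and cinemas stayed closed for long periods, so box office that year was dismal.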
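The yearly share behind that pie chart is a group-and-sum followed by a division. A minimal sketch with the standard library only, on placeholder rows that are not real box-office figures:

```python
from collections import defaultdict

# Placeholder rows (title, release date, box office); not real figures.
rows = [
    ('Film A', '2018-02-16', 300.0),
    ('Film B', '2019-02-05', 450.0),
    ('Film C', '2019-07-26', 500.0),
    ('Film D', '2020-08-21', 250.0),
]

# Sum box office per release year
totals = defaultdict(float)
for _title, release_date, box_office in rows:
    year = release_date.split('-')[0]
    totals[year] += box_office

# Convert each yearly total into a percentage of the grand total
grand_total = sum(totals.values())
shares = {year: round(100 * value / grand_total, 2) for year, value in totals.items()}
peak_year = max(totals, key=totals.get)

print(shares, peak_year)
```

Because only listed films are counted, a year's share reflects both how many of its films made the list and how much each earned, so it is not a measure of the whole market.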
