Interviewer: Redis is already fast with a single thread, so why did 6.0 introduce multithreading, and what does it gain?

Author: Java斗帝之路

Link: https://www.jianshu.com/p/ba2f082ff668

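As an in-memory cache, Redis has long been known for high performance. With no context switching and no locking, even a single thread can serve about 110,000 reads/s and 81,000 writes/s. But the single-threaded design also causes some problems:

It can use only one CPU core; deleting a very large key (for example, a Set with millions of members) can block the server for several seconds; and QPS is hard to raise further.

To address these problems, Redis introduced Lazy Free in 4.0 and multithreaded I/O in 6.0, moving step by step toward multithreading. Both are described in detail below.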

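How the single thread works

Redis is commonly described as single-threaded, but where does the single thread show up, and how does it serve concurrent client requests? To answer these questions, first look at how Redis works.

The Redis server is an event-driven program that handles two kinds of events:

File events:

The Redis server connects to clients (or other Redis servers) through sockets, and a file event is the server's abstraction of a socket operation. Communication between server and client generates file events, and the server completes network operations such as accept, read, write, and close by listening for and handling them.

Time events:

Some server operations (such as the serverCron function) must run at given points in time, and a time event is the server's abstraction of such timed operations, for example expired-key cleanup and server statistics.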

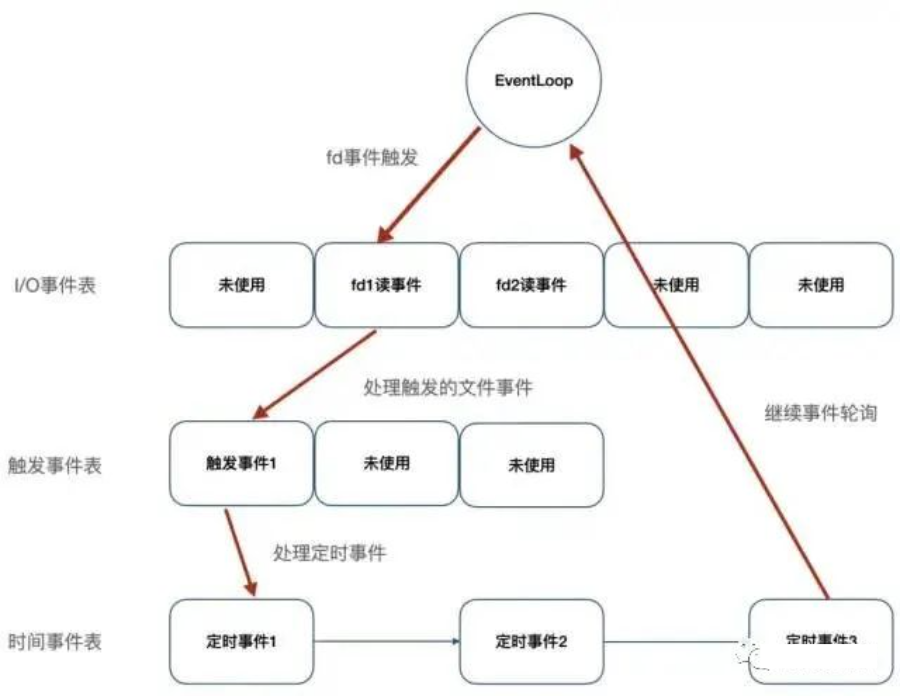

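As the figure above shows, Redis abstracts both file events and time events. The event poller listens on the I/O event table; once file events are ready, Redis handles them first and then handles time events. All of this event handling runs on a single thread, which is why Redis is called single-threaded.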
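The ordering described above can be illustrated with a minimal sketch in C. This is not the Redis event library: the `file_event` and `time_event` structs, the two handlers, and `process_events` are hypothetical names chosen for illustration, and readiness is supplied by the caller instead of coming from the operating system.

```c
#include <assert.h>

#define MAX_LOG 16

/* Hypothetical handler type shared by both kinds of events. */
typedef void (*handler_fn)(int id);

typedef struct {
    int id;
    handler_fn handler;
} file_event;

typedef struct {
    int id;
    long when_ms;          /* absolute time at which the event is due */
    handler_fn handler;
} time_event;

/* Records the order in which handlers ran ('F' = file, 'T' = time). */
static char event_log[MAX_LOG];
static int event_log_len = 0;

void on_file(int id) {
    (void)id;
    if (event_log_len < MAX_LOG) event_log[event_log_len++] = 'F';
}

void on_time(int id) {
    (void)id;
    if (event_log_len < MAX_LOG) event_log[event_log_len++] = 'T';
}

/* One loop iteration on the calling thread: every ready file event first,
 * then every time event that is due. Returns the number of events handled. */
int process_events(const file_event *ready, int nready,
                   const time_event *timers, int ntimers, long now_ms) {
    assert(nready >= 0 && ntimers >= 0);
    int processed = 0;
    for (int i = 0; i < nready; i++) {
        ready[i].handler(ready[i].id);
        processed++;
    }
    for (int i = 0; i < ntimers; i++) {
        if (timers[i].when_ms <= now_ms) {
            timers[i].handler(timers[i].id);
            processed++;
        }
    }
    return processed;
}
```

Because both loops run on the caller's thread, a slow handler delays everything queued behind it, which is the weakness the later sections address.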

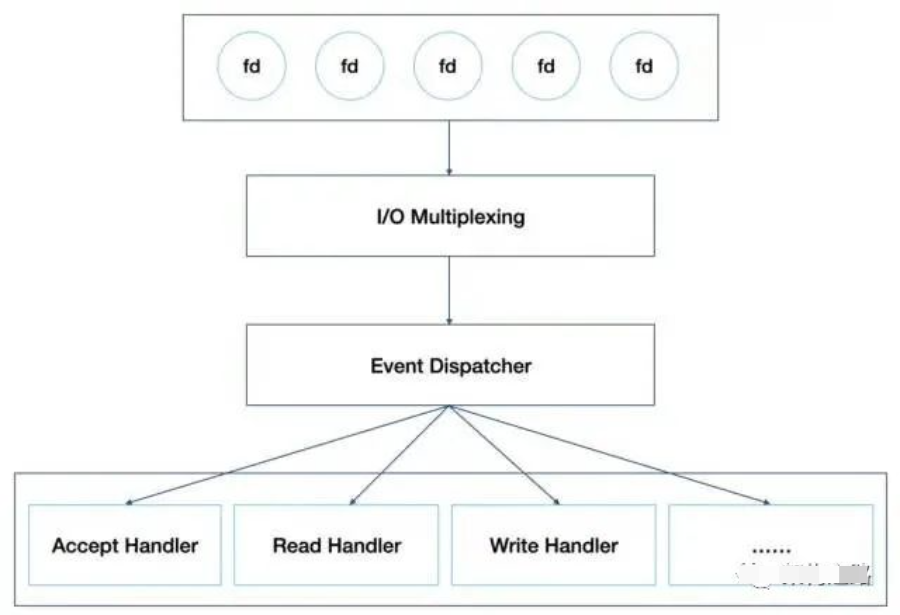

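In addition, as the figure shows, Redis built its own I/O event handler, the file event handler, on the Reactor pattern. It uses I/O multiplexing to listen on many sockets at once and associates each socket with the appropriate event handler functions, so a single thread can serve many clients concurrently.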
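A small Reactor-style dispatcher makes the idea concrete. The sketch below is an illustration only: it uses POSIX `poll()` as the multiplexer, which is an assumption made for portability of the example and not a statement about which system call Redis selects, and the `reactor` type and its functions are hypothetical names.

```c
#include <assert.h>
#include <poll.h>
#include <stddef.h>
#include <unistd.h>

#define MAX_FDS 8

/* Hypothetical handler type: called when its descriptor becomes readable. */
typedef void (*read_handler)(int fd, void *ctx);

typedef struct {
    struct pollfd fds[MAX_FDS];
    read_handler handlers[MAX_FDS];
    void *ctxs[MAX_FDS];
    int count;
} reactor;

void reactor_init(reactor *r) {
    assert(r != NULL);
    r->count = 0;
}

/* Register a descriptor together with the handler to run when it is readable. */
int reactor_add(reactor *r, int fd, read_handler h, void *ctx) {
    if (r->count >= MAX_FDS) return -1;
    r->fds[r->count].fd = fd;
    r->fds[r->count].events = POLLIN;
    r->fds[r->count].revents = 0;
    r->handlers[r->count] = h;
    r->ctxs[r->count] = ctx;
    r->count++;
    return 0;
}

/* One loop iteration: wait up to timeout_ms, then dispatch every readable
 * descriptor on the calling thread. Returns the number dispatched,
 * 0 on timeout, or -1 on error. */
int reactor_poll_once(reactor *r, int timeout_ms) {
    int n = poll(r->fds, (nfds_t)r->count, timeout_ms);
    if (n <= 0) return n;
    int dispatched = 0;
    for (int i = 0; i < r->count; i++) {
        if (r->fds[i].revents & POLLIN) {
            r->handlers[i](r->fds[i].fd, r->ctxs[i]);
            dispatched++;
        }
    }
    return dispatched;
}

/* Example handler: read up to 64 bytes and add the byte count to *ctx. */
void count_bytes(int fd, void *ctx) {
    char buf[64];
    ssize_t n = read(fd, buf, sizeof buf);
    if (n > 0) *(int *)ctx += (int)n;
}
```

Registering the read ends of two pipes and writing to both makes a single `reactor_poll_once` call dispatch both handlers, one after the other, without any second thread.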

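Because of this design, data processing needs no locking, which keeps the implementation simple and preserves high performance. "Single-threaded" refers only to event handling, though. Redis as a whole is not limited to that one thread: generating an RDB file, for example, is done by a forked child process. That is outside the scope of this article.

The Lazy Free mechanism

As described above, Redis executes client commands on a single thread. Each command is normally fast, and no other client request is served while it runs. But if a client sends a slow command, such as deleting a Set key with millions of members or running flushdb or flushall, the server must reclaim a large amount of memory and stalls for several seconds, which is a disaster for a heavily loaded cache. To solve this, Redis 4.0 introduced Lazy Free, which makes slow operations asynchronous. It was also a first step toward multithreading in event handling.

As the Redis author explains in his blog, one way to handle slow operations is incremental processing: add a time event so that, when deleting a Set key with millions of members, each run deletes only part of the big key's data until the whole key is gone. However, this approach can let reclamation fall behind allocation and eventually exhaust memory.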
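The incremental approach can be sketched as follows. This is a simplified illustration, not Redis code: a singly linked list stands in for the big key, and `list_build` and `list_free_step` are hypothetical helpers. Each call frees at most a fixed budget of nodes, as a periodic time event would.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical stand-in for a large collection: a singly linked list. */
typedef struct node {
    struct node *next;
} node;

/* Build a list of n nodes. Returns NULL if n is 0 or an allocation fails. */
node *list_build(size_t n) {
    node *head = NULL;
    for (size_t i = 0; i < n; i++) {
        node *nd = malloc(sizeof *nd);
        if (nd == NULL) {
            /* Undo the partial build so nothing leaks. */
            while (head != NULL) {
                node *next = head->next;
                free(head);
                head = next;
            }
            return NULL;
        }
        nd->next = head;
        head = nd;
    }
    return head;
}

/* Free at most `budget` nodes and advance *head past them.
 * Returns the number of nodes freed; 0 means nothing is left. */
size_t list_free_step(node **head, size_t budget) {
    assert(head != NULL);
    size_t freed = 0;
    while (*head != NULL && freed < budget) {
        node *next = (*head)->next;
        free(*head);
        *head = next;
        freed++;
    }
    return freed;
}
```

With a budget of 300, a 1,000-node list takes four steps to disappear. If the workload adds more than 300 nodes between steps, the list keeps growing, which is the memory-exhaustion risk the paragraph above points out.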

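Redis therefore made big-key deletion asynchronous through non-blocking deletion (the UNLINK command). A separate thread reclaims the big key's memory, while the main thread only unlinks the key, returns quickly, and moves on to other events, so the server is not blocked for long.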

    void delCommand(client *c) {
        delGenericCommand(c,server.lazyfree_lazy_user_del);
    }

    /* This command implements DEL and LAZYDEL. */
    void delGenericCommand(client *c, int lazy) {
        int numdel = 0, j;

        for (j = 1; j < c->argc; j++) {
            expireIfNeeded(c->db,c->argv[j]);
            // Depending on configuration, DEL deletes lazily or synchronously
            int deleted = lazy ? dbAsyncDelete(c->db,c->argv[j]) :
                                 dbSyncDelete(c->db,c->argv[j]);
            if (deleted) {
                signalModifiedKey(c,c->db,c->argv[j]);
                notifyKeyspaceEvent(NOTIFY_GENERIC,
                    "del",c->argv[j],c->db->id);
                server.dirty++;
                numdel++;
            }
        }
        addReplyLongLong(c,numdel);
    }

    /* Delete a key, value, and associated expiration entry if any, from the DB.
     * If there are enough allocations to free the value object may be put into
     * a lazy free list instead of being freed synchronously. The lazy free list
     * will be reclaimed in a different bio.c thread. */
    #define LAZYFREE_THRESHOLD 64
    int dbAsyncDelete(redisDb *db, robj *key) {
        /* Deleting an entry from the expires dict will not free the sds of
         * the key, because it is shared with the main dictionary. */
        if (dictSize(db->expires) > 0) dictDelete(db->expires,key->ptr);

        /* If the value is composed of a few allocations, to free in a lazy way
         * is actually just slower... So under a certain limit we just free
         * the object synchronously. */
        dictEntry *de = dictUnlink(db->dict,key->ptr);
        if (de) {
            robj *val = dictGetVal(de);
            // Estimate the effort needed to free the value
            size_t free_effort = lazyfreeGetFreeEffort(val);

            /* If releasing the object is too much work, do it in the background
             * by adding the object to the lazy free list.
             * Note that if the object is shared, to reclaim it now it is not
             * possible. This rarely happens, however sometimes the implementation
             * of parts of the Redis core may call incrRefCount() to protect
             * objects, and then call dbDelete(). In this case we'll fall
             * through and reach the dictFreeUnlinkedEntry() call, that will be
             * equivalent to just calling decrRefCount(). */
            // Delete asynchronously only when the free effort exceeds the
            // threshold; otherwise fall back to synchronous deletion
            if (free_effort > LAZYFREE_THRESHOLD && val->refcount == 1) {
                atomicIncr(lazyfree_objects,1);
                bioCreateBackgroundJob(BIO_LAZY_FREE,val,NULL,NULL);
                dictSetVal(db->dict,de,NULL);
            }
        }

        /* Release the key-val pair, or just the key if we set the val
         * field to NULL in order to lazy free it later. */
        if (de) {
            dictFreeUnlinkedEntry(db->dict,de);
            if (server.cluster_enabled) slotToKeyDel(key->ptr);
            return 1;
        } else {
            return 0;
        }
    }
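The threshold decision in the excerpt can be reduced to a small standalone sketch. Several things here are assumptions made for illustration: `big_value`, `value_create`, and `value_release` are hypothetical names, the free effort is taken to be the number of allocations, the shared-object (`refcount`) check is omitted, and a new POSIX thread is started for each lazy free, whereas the excerpt hands the job to an existing background thread through `bioCreateBackgroundJob`.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>
#include <stdlib.h>

#define FREE_EFFORT_THRESHOLD 64   /* mirrors LAZYFREE_THRESHOLD in the excerpt */

/* Hypothetical value: one header plus `nallocs` separately allocated items. */
typedef struct {
    size_t nallocs;
    void **items;
} big_value;

big_value *value_create(size_t nallocs) {
    big_value *v = malloc(sizeof *v);
    if (v == NULL) return NULL;
    v->nallocs = nallocs;
    v->items = calloc(nallocs ? nallocs : 1, sizeof *v->items);
    if (v->items == NULL) {
        free(v);
        return NULL;
    }
    /* A failed item allocation leaves NULL, which free() accepts later. */
    for (size_t i = 0; i < nallocs; i++) v->items[i] = malloc(16);
    return v;
}

static void value_free(big_value *v) {
    for (size_t i = 0; i < v->nallocs; i++) free(v->items[i]);
    free(v->items);
    free(v);
}

/* Thread entry point: reclaim the value away from the caller's thread. */
static void *free_in_background(void *arg) {
    value_free(arg);
    return NULL;
}

/* Release a value that has already been unlinked from its container.
 * Returns 1 if the free was handed to a background thread (its id is stored
 * in *tid and the caller should join it), or 0 if it was freed synchronously. */
int value_release(big_value *v, pthread_t *tid) {
    assert(v != NULL && tid != NULL);
    if (v->nallocs > FREE_EFFORT_THRESHOLD &&
        pthread_create(tid, NULL, free_in_background, v) == 0) {
        return 1;
    }
    value_free(v);   /* cheap value, or thread creation failed */
    return 0;
}
```

A value with 8 allocations is freed inline because starting background work would cost more than the free itself, while a value with 100,000 allocations is handed off and the caller returns immediately.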

To make lazy free possible, Redis also removed shared objects from aggregate types. Compare the ZSet skiplist node before and after the change:

    // ZSet node in version 3.2.5: the value is defined as robj *obj
    /* ZSETs use a specialized version of Skiplists */
    typedef struct zskiplistNode {
        robj *obj;
        double score;
        struct zskiplistNode *backward;
        struct zskiplistLevel {
            struct zskiplistNode *forward;
            unsigned int span;
        } level[];
    } zskiplistNode;

    // ZSet node in version 6.0.10: the value is defined as sds ele
    /* ZSETs use a specialized version of Skiplists */
    typedef struct zskiplistNode {
        sds ele;
        double score;
        struct zskiplistNode *backward;
        struct zskiplistLevel {
            struct zskiplistNode *forward;
            unsigned long span;
        } level[];
    } zskiplistNode;

Removing shared objects not only made lazy free possible but also opened the way for Redis to move toward multithreading. As the author put it:

Now that values of aggregated data types are fully unshared, and client output buffers don’t contain shared objects as well, there is a lot to exploit. For example it is finally possible to implement threaded I/O in Redis, so that different clients are served by different threads. This means that we’ll have a global lock only when accessing the database, but the clients read/write syscalls and even the parsing of the command the client is sending, can happen in different threads.

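Multithreaded I/O and its limitations

Redis 4.0 introduced Lazy Free, giving Redis a dedicated Lazy Free thread for reclaiming big keys. It also removed shared objects from aggregate types, which made multithreading possible. Redis delivered on that in 6.0 with multithreaded I/O.

Implementation

As the official responses have long stated, the performance bottleneck of Redis is not the CPU but memory and the network. The multithreading released in 6.0 therefore does not make event handling multithreaded; it applies only to I/O. Making event handling multithreaded would cause lock contention and frequent context switches, and even with segmented locks to reduce contention it would require major changes to the Redis core without a guaranteed performance gain.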

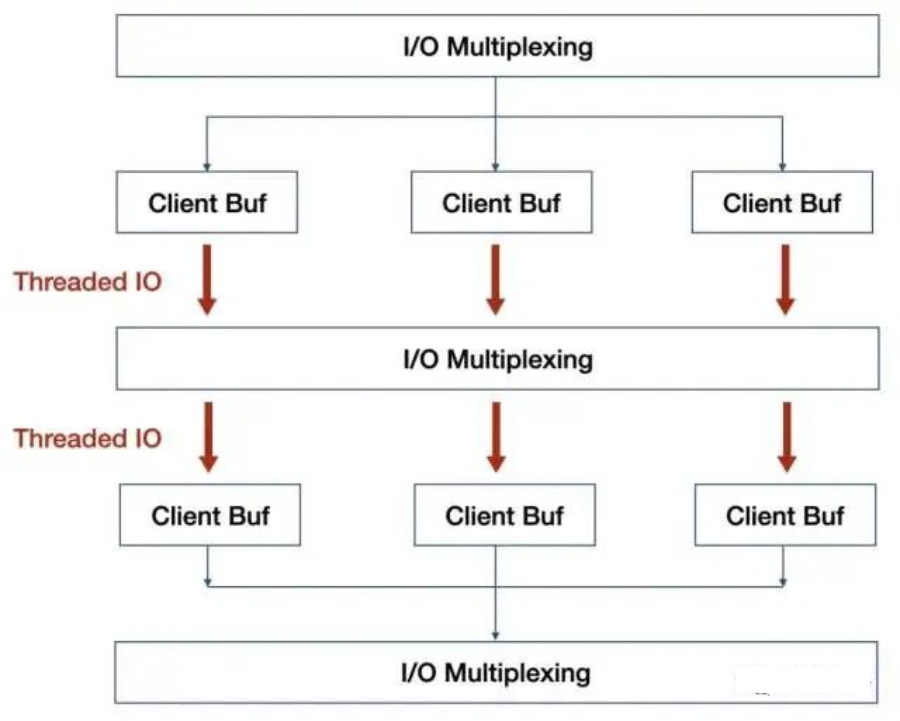

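The red part of the figure above is what Redis made multithreaded, using multiple cores to share the I/O read and write load. Each time the event-handling thread obtains readable events, it distributes all ready read events to the I/O threads and waits. Once every I/O thread has finished reading, the event-handling thread executes the commands. When it finishes, it likewise distributes the write events to the I/O threads and waits for them all to finish writing.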

    int handleClientsWithPendingReadsUsingThreads(void) {
        ...

        /* Distribute the clients across N different lists. */
        listIter li;
        listNode *ln;
        listRewind(server.clients_pending_read,&li);
        int item_id = 0;
        // Assign the pending clients to the I/O threads round-robin
        while((ln = listNext(&li))) {
            client *c = listNodeValue(ln);
            int target_id = item_id % server.io_threads_num;
            listAddNodeTail(io_threads_list[target_id],c);
            item_id++;
        }

        ...

        /* Wait for all the other threads to end their work. */
        // Poll until every I/O thread has finished
        while(1) {
            unsigned long pending = 0;
            for (int j = 1; j < server.io_threads_num; j++)
                pending += io_threads_pending[j];
            if (pending == 0) break;
        }

        ...

        return processed;
    }

    void *IOThreadMain(void *myid) {
        ...

        while(1) {
            ...

            // The I/O thread performs the reads or writes
            while((ln = listNext(&li))) {
                client *c = listNodeValue(ln);
                // io_threads_op tells whether this is a read or a write phase
                if (io_threads_op == IO_THREADS_OP_WRITE) {
                    writeToClient(c,0);
                } else if (io_threads_op == IO_THREADS_OP_READ) {
                    readQueryFromClient(c->conn);
                } else {
                    serverPanic("io_threads_op value is unknown");
                }
            }
            listEmpty(io_threads_list[id]);
            io_threads_pending[id] = 0;

            if (tio_debug) printf("[%ld] Done\n", id);
        }
    }
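The distribute-then-wait pattern in the two excerpts can be reproduced in a small standalone program. The sketch below is an approximation under stated assumptions: it uses POSIX threads and C11 atomics, every name in it is hypothetical, the "work" is summing integers in place of socket reads or writes, and every list goes to a worker thread, whereas the wait loop in the excerpt starts at index 1.

```c
#include <assert.h>
#include <pthread.h>
#include <sched.h>
#include <stdatomic.h>
#include <stdint.h>

#define NUM_IO_THREADS 4
#define MAX_ITEMS 64

/* Hypothetical stand-ins for io_threads_list and io_threads_pending. */
static int items[NUM_IO_THREADS][MAX_ITEMS];
static int counts[NUM_IO_THREADS];
static atomic_int pending[NUM_IO_THREADS];
static atomic_int stop_flag;
static atomic_long total;
static pthread_t threads[NUM_IO_THREADS];

static void *io_thread_main(void *arg) {
    int id = (int)(intptr_t)arg;
    for (;;) {
        /* Spin until the main thread publishes work or asks us to stop. */
        while (atomic_load(&pending[id]) == 0) {
            if (atomic_load(&stop_flag)) return NULL;
            sched_yield();
        }
        long sum = 0;
        for (int i = 0; i < counts[id]; i++) sum += items[id][i];
        atomic_fetch_add(&total, sum);
        atomic_store(&pending[id], 0);   /* tell the main thread we are done */
    }
}

int io_threads_start(void) {
    atomic_store(&stop_flag, 0);
    for (int t = 0; t < NUM_IO_THREADS; t++) {
        atomic_store(&pending[t], 0);
        if (pthread_create(&threads[t], NULL, io_thread_main,
                           (void *)(intptr_t)t) != 0) return -1;
    }
    return 0;
}

void io_threads_stop(void) {
    atomic_store(&stop_flag, 1);
    for (int t = 0; t < NUM_IO_THREADS; t++) pthread_join(threads[t], NULL);
}

/* Distribute n values round-robin, wake the workers, then poll until all of
 * them report completion. Returns the sum the workers computed. */
long fan_out_and_wait(const int *values, int n) {
    assert(n >= 0 && n <= NUM_IO_THREADS * MAX_ITEMS);
    for (int t = 0; t < NUM_IO_THREADS; t++) counts[t] = 0;
    for (int i = 0; i < n; i++) {
        int target = i % NUM_IO_THREADS;
        items[target][counts[target]++] = values[i];
    }
    atomic_store(&total, 0);
    for (int t = 0; t < NUM_IO_THREADS; t++)
        atomic_store(&pending[t], counts[t]);   /* publish the work */
    for (;;) {
        int left = 0;
        for (int t = 0; t < NUM_IO_THREADS; t++) left += atomic_load(&pending[t]);
        if (left == 0) break;
        sched_yield();
    }
    return atomic_load(&total);
}
```

The caller cannot make progress inside `fan_out_and_wait` until the slowest worker finishes, and the workers spin while idle. The sketch yields the CPU on each pass only to stay friendly on machines with few cores.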

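Limitations

As the implementation shows, multithreading in 6.0 is not full multithreading. The I/O threads can only all read or all write at the same time, and the event-handling thread waits throughout. This is not a pipeline model, and the polling waits add considerable overhead.

How Tair implements multithreading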

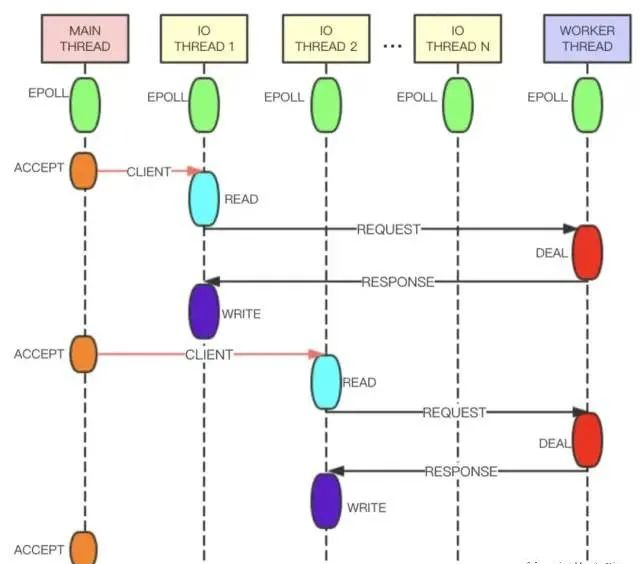

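Compared with multithreading in 6.0, the Tair implementation is more elegant. As the figure shows, the Main Thread in Tair handles client connection setup and similar work, the IO Threads handle request reading, response sending, and command parsing, and the Worker Thread is dedicated to event handling. An IO Thread reads and parses a user request, then places the result on a queue as a command for the Worker Thread. After processing the command, the Worker Thread generates a response and sends it back to the IO Thread through another queue. To increase parallelism, the IO Threads and the Worker Thread exchange data through lock-free queues and pipes, which gives better overall performance.

Summary

Redis 4.0 introduced the Lazy Free thread, which solved problems such as server blocking caused by big-key deletion. Version 6.0 introduced I/O threads and formally brought in multithreading, but the design is less elegant than the one in Tair and the performance gain is modest: in stress tests, the multithreaded version performs about 2x the single-threaded version, while the multithreaded version of Tair reaches about 3x. In the Redis author's view, multithreading in Redis comes down to two approaches, I/O threading and slow commands threading, as he wrote in his blog: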
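The text does not show how Tair builds its lock-free queues, so the following is only a generic sketch of one common design: a single-producer, single-consumer ring buffer using C11 atomics. The `command` struct, the capacity, and all function names are assumptions made for illustration. One such queue would carry parsed commands from an IO Thread to the Worker Thread, and a second one would carry responses back.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>

#define QUEUE_CAPACITY 8
static_assert((QUEUE_CAPACITY & (QUEUE_CAPACITY - 1)) == 0,
              "capacity must be a power of two");

/* Hypothetical parsed command handed from an I/O thread to the worker. */
typedef struct {
    int client_id;
    int opcode;
} command;

typedef struct {
    command slots[QUEUE_CAPACITY];
    atomic_size_t head;   /* next slot to read; written only by the consumer */
    atomic_size_t tail;   /* next slot to write; written only by the producer */
} spsc_queue;

void spsc_init(spsc_queue *q) {
    atomic_init(&q->head, 0);
    atomic_init(&q->tail, 0);
}

/* Producer side. Returns false when the queue is full. */
bool spsc_push(spsc_queue *q, command c) {
    size_t tail = atomic_load_explicit(&q->tail, memory_order_relaxed);
    size_t head = atomic_load_explicit(&q->head, memory_order_acquire);
    if (tail - head == QUEUE_CAPACITY) return false;
    q->slots[tail & (QUEUE_CAPACITY - 1)] = c;
    /* Release: the slot write above becomes visible before the new tail. */
    atomic_store_explicit(&q->tail, tail + 1, memory_order_release);
    return true;
}

/* Consumer side. Returns false when the queue is empty. */
bool spsc_pop(spsc_queue *q, command *out) {
    size_t head = atomic_load_explicit(&q->head, memory_order_relaxed);
    size_t tail = atomic_load_explicit(&q->tail, memory_order_acquire);
    if (head == tail) return false;
    *out = q->slots[head & (QUEUE_CAPACITY - 1)];
    /* Release: the slot read above completes before the slot is reused. */
    atomic_store_explicit(&q->head, head + 1, memory_order_release);
    return true;
}
```

Neither side ever takes a lock or waits for the other, so an I/O thread can keep parsing requests while the worker executes earlier ones. This queue is safe only with exactly one producer thread and one consumer thread.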

I/O threading is not going to happen in Redis AFAIK, because after much consideration I think it’s a lot of complexity without a good reason. Many Redis setups are network or memory bound actually. Additionally I really believe in a share-nothing setup, so the way I want to scale Redis is by improving the support for multiple Redis instances to be executed in the same host, especially via Redis Cluster.

What instead I really want a lot is slow operations threading, and with the Redis modules system we already are in the right direction. However in the future (not sure if in Redis 6 or 7) we’ll get key-level locking in the module system so that threads can completely acquire control of a key to process slow operations. Now modules can implement commands and can create a reply for the client in a completely separated way, but still to access the shared data set a global lock is needed: this will go away.

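The Redis author prefers clustering to I/O threading as the way to scale, especially now that the native Redis Cluster Proxy released alongside 6.0 makes clusters easier to use.

He also favors slow operations threading (such as Lazy Free in 4.0) as the route to multithreading. Whether later versions will refine the I/O threads and use modules to optimize slow operations is well worth watching.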
