Walking through an NLP competition from scratch

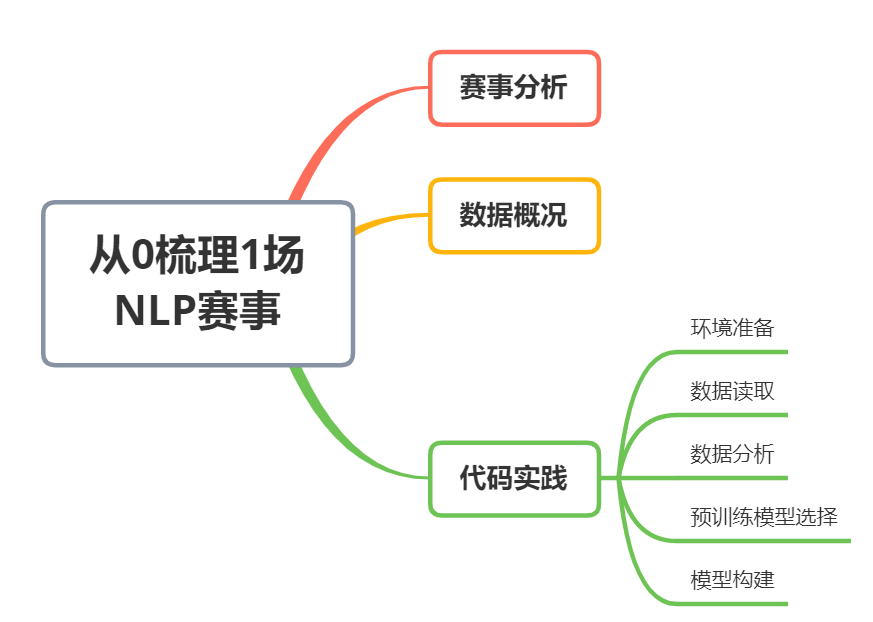

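Using Tianchi's Chinese pre-trained model generalization competition as the backdrop, this post presents a baseline suited to NLP beginners: how to use BERT to handle multi-task, multi-class classification simply and quickly. The post is organized into the sections below.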

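0. Competition background

Competition name: Global AI Technology Innovation Competition [Warm-up Round 2]: Chinese Pre-trained Model Generalization Challenge

Competition URL:

https://tianchi.aliyun.com/s/3bd272d942f97725286a8e44f40f3f74

Competition type: natural language processing, pre-trained models

1. Task analysis

1. The task is a data-mining-style problem: classify text by fine-tuning a pre-trained model.

2. It is a typical multi-task, multi-class classification problem.

3. The baseline mainly uses keras_bert, together with pandas, numpy, matplotlib, seaborn, sklearn, keras and other common data-mining libraries and frameworks.

4. The rules forbid manual labeling; no external data may be used during fine-tuning; the three tasks must share a single BERT; and only single-fold training is allowed.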

2. Data overview

The competition selects 3 representative tasks and requires the submitted model to predict the labels of every task at the same time. Data download URL: https://tianchi.aliyun.com/s/3bd272d942f97725286a8e44f40f3f74

Data format:

Task 1: OCNLI, original Chinese natural language inference, 3 classes

ocnli_train.head(3)
'''
   id content1 content2 label
0 0 一月份跟二月份肯定有一個月份有. 肯定有一個月份有 0
1 1 一月份跟二月份肯定有一個月份有. 一月份有 1
2 2 一月份跟二月份肯定有一個月份有. 一月二月都沒有 2
'''
len(ocnli_train['label'].unique())
#3

Task 2: OCEMOTION, Chinese emotion classification, 7 classes

ocemo_train.head(3)
'''
   id content label
0 0 '你知道多倫多附近有什么嗎?哈哈有破布耶...真的書上寫的你聽哦...你家那塊破布是世界上最... sadness
1 1 平安夜,圣誕節,都過了,我很難過,和媽媽吵了兩天,以死相逼才終止戰爭,現在還處于冷戰中。 sadness
2 2 我只是自私了一點,做自己想做的事情! sadness
'''
len(ocemo_train['label'].unique())
#7

Task 3: TNEWS, Toutiao news headline classification, 15 classes

times_train.head(3)
'''
   id content label
0 0 上課時學生手機響個不停,老師一怒之下把手機摔了,家長拿發票讓老師賠,大家怎么看待這種事? 108
1 1 商贏環球股份有限公司關于延期回復上海證券交易所對公司2017年年度報告的事后審核問詢函的公告 104
2 2 通過中介公司買了二手房,首付都付了,現在賣家不想賣了。怎么處理? 106
'''
len(times_train['label'].unique())
#15

3. Code walkthrough

Step 1: Environment setup

Import the required packages

import pandas as pd
import codecs, gc
import numpy as np
from sklearn.model_selection import KFold
from keras_bert import load_trained_model_from_checkpoint, Tokenizer
from keras.metrics import top_k_categorical_accuracy
from keras.layers import *
from keras.callbacks import *
from keras.models import Model
import keras.backend as K
from keras.optimizers import Adam
from keras.utils import to_categorical
from sklearn.preprocessing import LabelEncoder

If you run the code on Google Colab, first upload the data to Google Drive, then run the following to mount the Drive and set up the environment.

from google.colab import drive
drive.mount('/content/drive')
'''
Directory layout:
../code #code
../data #data
../subs #model weights and submission files
../chinese_roberta_wwm_large_ext_L-24_H-1024_A-16 #BERT checkpoint directory
'''
!pip install keras-bert

Step 2: Reading the data

path = "/content/drive/My Drive/天池nlp預訓練/"
#For OCNLI, content1 + content2 is used as the task's content (the optional truncation to maxlentext1 characters is left commented out)
times_train = pd.read_csv(path + '/data/TNEWS_train1128.csv', sep='\t', header=None, names=('id', 'content', 'label')).astype(str)
ocemo_train = pd.read_csv(path + '/data/OCEMOTION_train1128.csv',sep='\t', header=None, names=('id', 'content', 'label')).astype(str)
ocnli_train = pd.read_csv(path + '/data/OCNLI_train1128.csv', sep='\t', header=None, names=('id', 'content1', 'content2', 'label')).astype(str)
ocnli_train['content'] = ocnli_train['content1'] + ocnli_train['content2']#.apply( lambda x: x[:maxlentext1] )
times_testa = pd.read_csv(path + '/data/TNEWS_a.csv', sep='\t', header=None, names=('id', 'content')).astype(str)
ocemo_testa = pd.read_csv(path + '/data/OCEMOTION_a.csv',sep='\t', header=None, names=('id', 'content')).astype(str)
ocnli_testa = pd.read_csv(path + '/data/OCNLI_a.csv', sep='\t', header=None, names=('id', 'content1', 'content2')).astype(str)
ocnli_testa['content'] = ocnli_testa['content1']+ ocnli_testa['content2']#.apply( lambda x: x[:maxlentext1] )

1) Merging the datasets

The content and label columns of the three tasks are concatenated row-wise to form the training set with its labels and the test set, which simply turns the three tasks into a single task.

#Merge the training and test data of the three tasks
train_df = pd.concat([times_train, ocemo_train, ocnli_train[['id','content', 'label']]], axis=0).copy()
testa_df = pd.concat([times_testa, ocemo_testa, ocnli_testa[['id', 'content']]], axis=0).copy()

2) Label encoding

#Encode labels with LabelEncoder, because the labels fed to the model must be integers starting from 0
encode_label = LabelEncoder()
train_df['label'] = encode_label.fit_transform(train_df['label'].apply(str))
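
Because the three tasks use different label vocabularies (single digits for OCNLI, three-digit codes for TNEWS, words for OCEMOTION), it helps to see what the shared encoding looks like. The sketch below mimics in plain Python what LabelEncoder does with string labels, namely sorting the unique values and numbering them from 0. The helper name `fit_label_mapping` and the handful of sample labels (taken from the rows shown earlier) are illustrative assumptions, not part of the original code.

```python
def fit_label_mapping(labels):
    """Map each distinct string label to an integer id, in sorted order."""
    classes = sorted(set(labels))  # plain string sort, like LabelEncoder.classes_
    return {label: idx for idx, label in enumerate(classes)}

# A few labels from the three tasks, all cast to str as in the article.
sample_labels = ["0", "1", "2", "108", "104", "106", "sadness"]
mapping = fit_label_mapping(sample_labels)

# Inverse mapping, needed later to turn predicted ids back into task labels.
inverse = {idx: label for label, idx in mapping.items()}

for label, idx in sorted(mapping.items(), key=lambda kv: kv[1]):
    print(idx, label)
```

Strings sort character by character, so "104" comes before "2". The integer ids therefore interleave the tasks, which is why the inverse transform is needed when the predictions are written out.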

3) Inspecting the data

train_df.info()
'''
Int64Index: 147453 entries, 0 to 48777
Data columns (total 3 columns):
# Column Non-Null Count Dtype
--- ------ -------------- -----
0 id 147453 non-null object
1 content 147453 non-null object
2 label 147453 non-null int64
dtypes: int64(1), object(2)
memory usage: 4.5+ MB
'''

The data has three columns (id, content, label), and no row has an empty content.

Step 3: Exploratory data analysis (EDA)

1) Sentence length statistics

Sentence lengths are mainly used to choose the sequence length fed to BERT.

(
    times_train['content'].str.len().describe(percentiles=[.95, .98, .99]),
    ocemo_train['content'].str.len().describe(percentiles=[.95, .98, .99]),
    ocnli_train['content1'].str.len().describe(percentiles=[.95, .98, .99]),
    ocnli_train['content2'].str.len().describe(percentiles=[.95, .98, .99]),
)

'''
statistic  TNEWS content  OCEMOTION content  OCNLI content1  OCNLI content2
count      63360.000000   35315.000000       48778.000000    48778.000000
mean       22.171086      48.214328          24.174607       15.828529
std        7.334206       84.391942          11.515428       977.396848
min        2.000000       3.000000           8.000000        2.000000
50%        22.000000      34.000000          22.000000       10.000000
95%        33.000000      134.000000         46.000000       21.000000
98%        37.000000      138.000000         49.000000       24.000000
99%        39.000000      142.000000         50.000000       27.000000
max        145.000000     12326.000000       50.000000       215874.000000
'''

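As shown above, OCEMOTION has the longest texts, with a 99th-percentile length of 142 characters, so a BERT sequence length of 142 covers the full content of about 99% of the sentences. The very large maxima (12326 for OCEMOTION and 215874 for OCNLI content2) are rare outliers and do not need to be covered.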
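
To make the choice of sequence length concrete, the following sketch picks the smallest length that covers a given fraction of texts. It uses a nearest-rank percentile in plain Python rather than pandas; the function name `length_covering` and the toy lengths are assumptions for illustration. Since BERT inputs also carry the [CLS] and [SEP] tokens, the sketch reserves two extra positions, and it treats one character as one token, which is only an approximation.

```python
import math

def length_covering(lengths, fraction=0.99, special_tokens=2):
    """Smallest sequence length that fully covers `fraction` of the texts.

    Uses the nearest-rank percentile and adds room for [CLS] and [SEP].
    """
    if not lengths:
        raise ValueError("lengths must not be empty")
    ordered = sorted(lengths)
    rank = max(1, math.ceil(fraction * len(ordered)))  # 1-based nearest rank
    return ordered[rank - 1] + special_tokens

# Toy character lengths; the last value plays the role of an outlier.
toy_lengths = [10, 12, 15, 20, 22, 25, 30, 33, 40, 12326]

print(length_covering(toy_lengths, fraction=0.9))  # ignores the outlier
print(length_covering(toy_lengths, fraction=1.0))  # dominated by the outlier
```

Covering every text would force the length up to the outlier, which is why a high percentile is a more practical target than the maximum.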

2) Label distribution

train_df['label'].value_counts() / train_df.shape[0]
'''
1 0.113467
0 0.109940
17 0.107397
23 0.084603
21 0.060318
10 0.047771
6 0.041749
4 0.039918
13 0.039036
8 0.033292
3 0.032668
5 0.032268
11 0.029487
19 0.029481
9 0.027690
18 0.027588
12 0.027541
16 0.027460
22 0.027412
15 0.022923
7 0.016853
2 0.008993
24 0.006097
20 0.004001
14 0.002048
Name: label, dtype: float64
'''

As shown above, the label proportions differ widely, from about 0.2% to about 11%. If the training and validation sets are split purely at random, some labels may be missing from the validation set.

Step 4: Choosing a pre-trained model

1) Model selection

Among the many NLP pre-trained models, this baseline uses RoBERTa-wwm-ext-large, a Chinese pre-trained model based on Whole Word Masking released jointly by Harbin Institute of Technology and iFLYTEK. See the following links for details:

Paper: https://arxiv.org/abs/1906.08101

Open-source models: https://github.com/ymcui/Chinese-BERT-wwm

Project introduction from the HIT-iFLYTEK joint lab: https://mp.weixin.qq.com/s/EE6dEhvpKxqnVW_bBAKrnA

2) Tuning parameters

To make tuning easier, all tunable parameters are set in a single code block.

#Tuning parameters
er_patience = 2 #EarlyStopping patience
lr_patience = 5 #ReduceLROnPlateau patience
max_epochs = 2 #number of epochs
lr_rate = 2e-6 #learning rate
batch_sz = 4 #batch size
maxlen = 256 #sequence length; the model requires it to be at most 512
lr_factor = 0.85 #ReduceLROnPlateau factor
maxlentext1 = 200 #length kept from the first OCNLI sentence
n_folds = 10 #validation set share: 1/n_folds

Step 5: Building the model

1) Split the data (train, val) for training and evaluation

StratifiedKFold stratified sampling is used to hold out 10% of the training data as the validation set.

###Use stratified sampling to hold out 10% of the training set as the validation set
from sklearn.model_selection import StratifiedKFold
skf = StratifiedKFold(n_splits=n_folds, shuffle=True, random_state=222)
X_trn = pd.DataFrame()
X_val = pd.DataFrame()
for train_index, test_index in skf.split(train_df.copy(), train_df['label']):
    X_trn, X_val = train_df.iloc[train_index], train_df.iloc[test_index]
    break #multi-fold training is not allowed, so only the first fold is used
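
To illustrate why a stratified split helps with the rare labels seen in the EDA, here is a plain-Python sketch of a single stratified hold-out. It is not the scikit-learn implementation; the function `stratified_holdout`, the seed, and the toy labels are assumptions made for illustration.

```python
import random
from collections import defaultdict

def stratified_holdout(labels, n_folds=10, seed=222):
    """Return (train_idx, val_idx), holding out about 1/n_folds of each label."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for idx, label in enumerate(labels):
        by_label[label].append(idx)

    train_idx, val_idx = [], []
    for label in sorted(by_label):
        idxs = by_label[label]
        rng.shuffle(idxs)
        n_val = len(idxs) // n_folds  # labels with fewer than n_folds rows stay in train
        val_idx.extend(idxs[:n_val])
        train_idx.extend(idxs[n_val:])
    return sorted(train_idx), sorted(val_idx)

# Toy, imbalanced labels: one frequent class and one rare class.
toy_labels = ["common"] * 90 + ["rare"] * 10
train_idx, val_idx = stratified_holdout(toy_labels, n_folds=10)
val_labels = {toy_labels[i] for i in val_idx}

print(len(train_idx), len(val_idx), sorted(val_labels))
```

Sampling within each label guarantees that the rare class reaches the validation set, whereas a purely random 10% draw could miss it.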

F1 is used as the evaluation metric; when it stops improving, the learning rate is reduced.

from keras import backend as K

def f1(y_true, y_pred):
    def recall(y_true, y_pred):
        """Recall metric.

        Only computes a batch-wise average of recall.
        Computes the recall, a metric for multi-label classification of
        how many relevant items are selected.
        """
        true_positives = K.sum(K.round(K.clip(y_true * y_pred, 0, 1)))
        possible_positives = K.sum(K.round(K.clip(y_true, 0, 1)))
        return true_positives / (possible_positives + K.epsilon())

    def precision(y_true, y_pred):
        """Precision metric.

        Only computes a batch-wise average of precision.
        Computes the precision, a metric for multi-label classification of
        how many selected items are relevant.
        """
        true_positives = K.sum(K.round(K.clip(y_true * y_pred, 0, 1)))
        predicted_positives = K.sum(K.round(K.clip(y_pred, 0, 1)))
        return true_positives / (predicted_positives + K.epsilon())

    #Use separate names so the nested functions are not shadowed
    p = precision(y_true, y_pred)
    r = recall(y_true, y_pred)
    return 2*((p*r)/(p+r+K.epsilon()))
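
The Keras metric above pools its counts across all classes within a batch, so it is closer to a batch-wise micro-averaged score. For comparison, a macro-averaged F1 computes F1 per class and then takes the unweighted mean, which gives rare labels the same weight as frequent ones. The sketch below is a plain-Python illustration of that definition; `macro_f1` is a hypothetical helper, and whether the leaderboard uses exactly this averaging is an assumption to check against the competition rules.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over the classes present in y_true."""
    classes = sorted(set(y_true))
    scores = []
    for c in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn  # F1 = 2TP / (2TP + FP + FN)
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# Toy labels: the single example of class 1 is misclassified as class 0.
y_true = [0, 0, 0, 0, 1, 2]
y_pred = [0, 0, 0, 0, 0, 2]

print(round(macro_f1(y_true, y_pred), 4))
```

In this toy case five of six predictions are correct, yet the macro score drops to roughly 0.63 because the missed class contributes an F1 of zero.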

2) Building the input format for BERT

#Number of label classes
n_cls = len( train_df['label'].unique() )

#Convert the training data, test data and labels into the model's input format
#Training set: store each row's (content, one-hot label) tuple in a list, then convert to a numpy array
TRN_LIST = []
for data_row in X_trn.iloc[:].itertuples():
    TRN_LIST.append((data_row.content, to_categorical(data_row.label, n_cls)))
TRN_LIST = np.array(TRN_LIST, dtype=object) #dtype=object because each row mixes a str and an array

#Validation set: same conversion
VAL_LIST = []
for data_row in X_val.iloc[:].itertuples():
    VAL_LIST.append((data_row.content, to_categorical(data_row.label, n_cls)))
VAL_LIST = np.array(VAL_LIST, dtype=object)

#Test set: same conversion, with a dummy label (class 0) for every row
DATA_LIST_TEST = []
for data_row in testa_df.iloc[:].itertuples():
    DATA_LIST_TEST.append((data_row.content, to_categorical(0, n_cls)))
DATA_LIST_TEST = np.array(DATA_LIST_TEST, dtype=object)

3) Building the model

A Lambda layer after BERT takes the vector corresponding to [CLS], followed by a Dense layer that produces the classification output.

#BERT model setup
def build_bert(nclass):
    bert_model = load_trained_model_from_checkpoint(config_path, checkpoint_path, seq_len=None) #load the pre-trained model
    for l in bert_model.layers:
        l.trainable = True #fine-tune every layer
    x1_in = Input(shape=(None,))
    x2_in = Input(shape=(None,))
    x = bert_model([x1_in, x2_in])
    x = Lambda(lambda x: x[:, 0])(x) #take the [CLS] vector for classification
    p = Dense(nclass, activation='softmax')(x) #Dense layer with softmax output
    model = Model([x1_in, x2_in], p)
    model.compile(loss='categorical_crossentropy',
                  optimizer=Adam(lr_rate), #optimizer and learning rate
                  metrics=['accuracy', f1])
    model.summary()
    return model
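
`build_bert` refers to `config_path` and `checkpoint_path`, which the text does not define. A minimal sketch of how they might be set is shown below. It assumes the checkpoint directory named in the directory layout earlier and the file names commonly found in TensorFlow BERT releases (`bert_config.json`, `bert_model.ckpt`, `vocab.txt`); the project root, the `dict_path` variable, and the `load_vocab` helper are hypothetical, so check the actual contents of your download.

```python
import os

# Assumed project root on Google Drive; replace with your own folder.
project_root = "/content/drive/My Drive/tianchi_nlp"  # hypothetical path
bert_dir = os.path.join(project_root, "chinese_roberta_wwm_large_ext_L-24_H-1024_A-16")

# File names assumed to follow the usual TensorFlow BERT checkpoint layout.
config_path = os.path.join(bert_dir, "bert_config.json")
checkpoint_path = os.path.join(bert_dir, "bert_model.ckpt")
dict_path = os.path.join(bert_dir, "vocab.txt")

def load_vocab(vocab_file):
    """Read a BERT vocab file (one token per line) into a token -> id dict."""
    token_dict = {}
    with open(vocab_file, encoding="utf-8") as reader:
        for line in reader:
            token_dict[line.strip()] = len(token_dict)
    return token_dict

for name, p in [("config", config_path), ("checkpoint", checkpoint_path), ("vocab", dict_path)]:
    print(name, p)
```

A dictionary built this way is the kind of token-to-id mapping that the keras_bert `Tokenizer` constructor takes.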

4) Training the model

On a Google Colab V100, one epoch takes about 1.5 hours; two epochs are enough.

#Training function
def run_nocv(nfold, trn_data, val_data, data_labels, data_test, n_cls):
    test_model_pred = np.zeros((len(data_test), n_cls))
    model = build_bert(n_cls)
    #Uncomment the next line to load saved weights and resume training
    #model.load_weights(path + '/subs/model.epoch01_val_loss0.9911_val_acc0.6445_val_f10.6276.hdf5')
    #Early stopping against overfitting; mode='max' is required because a higher val_f1 is better
    early_stopping = EarlyStopping(monitor="val_f1", mode='max', patience=er_patience)
    #Reduce the learning rate when the metric stops improving
    plateau = ReduceLROnPlateau(monitor="val_f1", verbose=1, mode='max', factor=lr_factor, patience=lr_patience)
    #Save the weights of the model with the best val_f1
    checkpoint = ModelCheckpoint(path + "/subs/model.epoch{epoch:02d}_val_loss{val_loss:.4f}_val_acc{val_accuracy:.4f}_val_f1{val_f1:.4f}.hdf5", monitor="val_f1", verbose=2, save_best_only=True, mode='max', save_weights_only=True)
    #The training and validation sets can be shuffled; the test set must not be (otherwise predictions cannot be matched to IDs when writing the result files)
    train_D = data_generator(trn_data, shuffle=True)
    valid_D = data_generator(val_data, shuffle=True)
    test_D = data_generator(data_test, shuffle=False)
    #Train the model
    model.fit_generator(
        train_D.__iter__(),
        steps_per_epoch=len(train_D),
        epochs=max_epochs,
        validation_data=valid_D.__iter__(),
        validation_steps=len(valid_D),
        callbacks=[early_stopping, plateau, checkpoint],
    )
    #Predict on the test set
    test_model_pred = model.predict_generator(test_D.__iter__(), steps=len(test_D), verbose=1)
    train_model_pred = test_model_pred #placeholder; was model.predict(train_D.__iter__(), steps=len(train_D), verbose=1)
    del model
    gc.collect() #free memory
    K.clear_session() #reset the Keras backend session
    return test_model_pred, train_model_pred
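
`run_nocv` relies on a `data_generator` class that the text does not show. The sketch below is one plausible shape for it, not the author's code: it yields padded batches forever, as Keras generators are expected to, and exposes `len()` as the number of steps per epoch. To stay self-contained it takes the encoder as a parameter (`encode`, a hypothetical argument) and returns plain lists. In the real pipeline one would presumably wrap the keras_bert tokenizer, whose `encode` method returns token ids and segment ids, and convert each batch with `np.array` before handing it to Keras.

```python
import random

def pad_batch(seqs, pad_id=0):
    """Right-pad every sequence in the batch to the length of the longest one."""
    width = max(len(s) for s in seqs)
    return [s + [pad_id] * (width - len(s)) for s in seqs]

class data_generator:
    """Endless batch iterator over (text, one_hot_label) pairs."""

    def __init__(self, data, encode, batch_size=4, maxlen=256, shuffle=True, seed=0):
        self.data = list(data)
        self.encode = encode  # text -> (token_ids, segment_ids)
        self.batch_size = batch_size
        self.maxlen = maxlen
        self.shuffle = shuffle
        self.rng = random.Random(seed)
        # Batches per epoch, rounding up for the last partial batch.
        self.steps = (len(self.data) + batch_size - 1) // batch_size

    def __len__(self):
        return self.steps

    def __iter__(self):
        while True:  # loop forever; the caller decides how many steps to draw
            order = list(range(len(self.data)))
            if self.shuffle:
                self.rng.shuffle(order)
            for start in range(0, len(order), self.batch_size):
                rows = [self.data[i] for i in order[start:start + self.batch_size]]
                # Truncate by characters before encoding (a simplification).
                encoded = [self.encode(text[:self.maxlen]) for text, _ in rows]
                x1 = pad_batch([ids for ids, _ in encoded])
                x2 = pad_batch([seg for _, seg in encoded])
                y = [list(label) for _, label in rows]
                yield [x1, x2], y

def toy_encode(text):
    """Hypothetical stand-in for a tokenizer: one id per character."""
    ids = [ord(ch) % 100 + 1 for ch in text]
    return ids, [0] * len(ids)

toy_data = [("ab", [1, 0]), ("abcd", [0, 1]), ("a", [1, 0])]
gen = data_generator(toy_data, toy_encode, batch_size=2, shuffle=False)
batches = iter(gen)
(x1, x2), y = next(batches)

print(len(gen), x1, y)
```

Padding each batch only to its own longest sequence keeps short batches cheap, which matters when most texts are far shorter than maxlen.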

Call the function above to train and predict.

cvs = 1
#The output is a numpy array of probabilities with 25 columns
test_model_pred, train_model_pred = run_nocv(cvs, TRN_LIST, VAL_LIST, None, DATA_LIST_TEST, n_cls)

5) Writing the results

#Convert the predictions to a DataFrame
preds_tst_df = pd.DataFrame(test_model_pred)
#Inverse-transform range(0, n_cls) with encode_label and use the result as the DataFrame's column names
preds_col_names = encode_label.inverse_transform( range(0,n_cls) )
preds_tst_df.columns = preds_col_names

#For each task, take the column name with the highest probability among that task's label columns as the prediction
'''
For example, OCNLI predictions can only be 0, 1, or 2, so for each row of preds_tst_df the name of the column with the highest probability among columns 0, 1, and 2 is taken as the OCNLI test prediction; the other two tasks work the same way.
'''
times_preds = preds_tst_df.head(times_testa.shape[0])[times_train['label'].unique().tolist()]
times_preds = times_preds.idxmax(axis=1) #column name of the row-wise maximum
ocemo_preds = preds_tst_df.head(times_testa.shape[0] + ocemo_testa.shape[0]).tail(ocemo_testa.shape[0])[ocemo_train['label'].unique().tolist()]
ocemo_preds = ocemo_preds.idxmax(axis=1)
ocnli_preds = preds_tst_df.tail(ocnli_testa.shape[0])[ocnli_train['label'].unique().tolist()]
ocnli_preds = ocnli_preds.idxmax(axis=1)
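
The selection logic above can be sketched without pandas: for each row, only the probability columns that belong to the task's own labels compete. The helper `pick_task_label` and the toy probabilities are illustrative assumptions.

```python
def pick_task_label(row_probs, task_labels):
    """Return the task label with the highest probability in this row.

    row_probs maps every label of the merged problem to a probability;
    labels from the other tasks are ignored even if they score higher.
    """
    return max(task_labels, key=lambda label: row_probs[label])

# Toy output row over a merged label set (the values are made up).
row = {"0": 0.10, "1": 0.25, "2": 0.05, "108": 0.40, "sadness": 0.20}

print(pick_task_label(row, ["0", "1", "2"]))  # restricted to the OCNLI labels
print(max(row, key=row.get))                  # unrestricted argmax, for contrast
```

The contrast shows why the restriction matters: a single softmax over all 25 labels can put its highest probability on a label from a different task.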

#Write the predictions for the TNEWS task
times_sub = times_testa[['id']].copy()
times_sub['label'] = times_preds.values
times_sub.to_json(path + "/subs/tnews_predict.json", orient='records', lines=True)

#Write the predictions for the OCEMOTION task
ocemo_sub = ocemo_testa[['id']].copy()
ocemo_sub['label'] = ocemo_preds.values
ocemo_sub.to_json(path + "/subs/ocemotion_predict.json", orient='records', lines=True)

#Write the predictions for the OCNLI task
ocnli_sub = ocnli_testa[['id']].copy()
ocnli_sub['label'] = ocnli_preds.values
ocnli_sub.to_json(path + "/subs/ocnli_predict.json", orient='records', lines=True)

6) Online score

Online score in the first stage: 0.6342.

Hopefully this helps you work through a complete NLP competition.